In recent weeks I have been working sporadically on QtWebKit to fix the missing blur support for Canvas and CSS shadow (see the tracking bug 34479). This brings back some good memories; before I left Nokia, I was involved in prototyping the special effect stuff, both for the low-level (non-public) QPixmapFilter and the high-level QGraphicsEffect.

Blurring a drop shadow for a shape usually follows a very typical recipe: grab the alpha channel of the shape, apply the blur filter, and finally tint it with the intended shadow color. The last step is trivial using the SourceIn composition mode. The blur filter is supposed to follow the SVG specification on feGaussianBlur. The said specification mentions one possible way to approximate the perfect Gaussian blur: three successive box blurs.
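To make the recipe concrete, here is a minimal sketch in plain C++ (no Qt) of the three steps on an 8-bit alpha buffer. It is not the shadowBlur() code from the repository; the names `boxSizeForSigma`, `boxBlurLine`, `blurAlpha` and `tint` are made up for illustration. The box size formula and the handling of even box sizes follow the note in the SVG feGaussianBlur description; treating pixels outside the buffer as transparent and assuming a fully opaque shadow color are my simplifications.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Box size d for a Gaussian with standard deviation sigma, as suggested by
// the SVG feGaussianBlur note: d = floor(sigma * 3 * sqrt(2 * pi) / 4 + 0.5).
int boxSizeForSigma(double sigma)
{
    const double pi = 3.14159265358979323846;
    return static_cast<int>(std::floor(sigma * 3.0 * std::sqrt(2.0 * pi) / 4.0 + 0.5));
}

// One box-blur pass over `count` samples spaced `stride` apart. The window
// for sample i covers [i - left, i + right]; samples outside the line count
// as transparent (0). src and dst must not overlap.
void boxBlurLine(const std::uint8_t* src, std::uint8_t* dst,
                 int count, int stride, int left, int right)
{
    const int size = left + right + 1;
    int sum = 0;
    for (int k = 0; k <= right && k < count; ++k)  // window for i = 0
        sum += src[k * stride];
    for (int i = 0; i < count; ++i) {
        dst[i * stride] = static_cast<std::uint8_t>((sum + size / 2) / size);
        const int in = i + right + 1;   // sample entering the window
        const int out = i - left;       // sample leaving the window
        if (in < count) sum += src[in * stride];
        if (out >= 0) sum -= src[out * stride];
    }
}

// Approximate a Gaussian blur of the alpha channel with three box blurs.
// Odd d: three centered boxes of size d. Even d: two boxes of size d offset
// half a pixel in opposite directions, then one centered box of size d + 1.
void blurAlpha(std::vector<std::uint8_t>& alpha, int width, int height, double sigma)
{
    assert(width > 0 && height > 0);
    assert(alpha.size() == static_cast<std::size_t>(width) * height);
    const int d = boxSizeForSigma(sigma);
    if (d <= 1)
        return;  // too small to blur
    const int h = d / 2;
    const bool odd = (d % 2) != 0;
    const int left[3]  = { h, odd ? h : h - 1, h };
    const int right[3] = { odd ? h : h - 1, h, h };
    std::vector<std::uint8_t> tmp(alpha.size());
    for (int pass = 0; pass < 3; ++pass) {
        for (int y = 0; y < height; ++y)   // horizontal: alpha -> tmp
            boxBlurLine(&alpha[y * width], &tmp[y * width], width, 1, left[pass], right[pass]);
        for (int x = 0; x < width; ++x)    // vertical: tmp -> alpha
            boxBlurLine(&tmp[x], &alpha[x], height, width, left[pass], right[pass]);
    }
}

// Tint step: a premultiplied ARGB32 pixel of an opaque color (r, g, b)
// scaled by the blurred alpha, which is what SourceIn with a solid color
// boils down to.
std::uint32_t tint(std::uint8_t a, std::uint8_t r, std::uint8_t g, std::uint8_t b)
{
    auto mul = [a](std::uint8_t c) { return static_cast<std::uint32_t>((c * a + 127) / 255); };
    return (static_cast<std::uint32_t>(a) << 24) | (mul(r) << 16) | (mul(g) << 8) | mul(b);
}
```

Each pass keeps a running sum, so the cost per pixel does not grow with the box size. The price is that the whole buffer is read and written six times (three passes in each direction).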

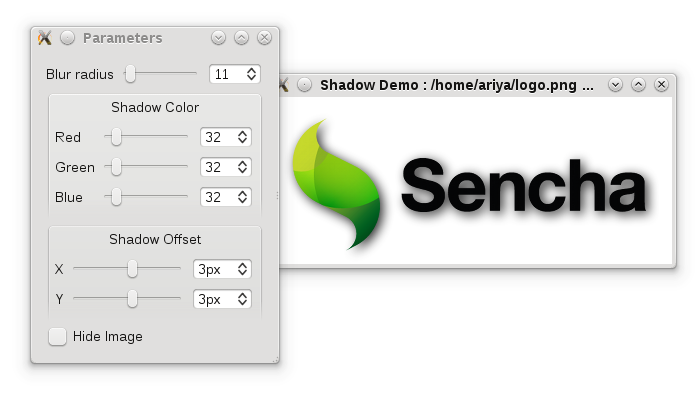

Since QPixmapFilter is private anyway and QGraphicsEffect is not suitable for this task, an attempt to implement what the specification outlines became my hobby for a few evenings. This is basically what emerged as the shadow blur implementation in QtWebKit. For the sake of code reuse, I pushed the implementation to the X2 repository under the graphics/shadowblur subdirectory. The shadowBlur() function itself is BSD licensed, and there is a demo program included for your pleasure:

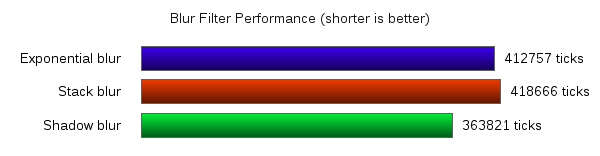

Performance-wise, the code is satisfactory (for such a portable implementation). Another good approach is to use stack blur, adopted among others by AGG. KHTML notably uses stack blur for its Canvas shadow blur support. Exponential blur is known to be very fast, although quality-wise it deviates farther from a true Gaussian one. The portable, raster version of QGraphicsDropShadowEffect (via QPixmapFilter) uses this algorithm.
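For comparison, exponential blur is commonly described as a first-order recursive filter run across the data in opposite directions. The following is only a floating-point sketch of that idea along a single line, not the fixed-point code Qt uses; the function name `expBlurLine` and the smoothing parameter `a` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One-dimensional exponential blur sketch: a first-order recursive filter
// run forward and then backward over the line. `a` is in (0, 1]; smaller
// values blur more, and a == 1 leaves the line unchanged.
void expBlurLine(std::vector<float>& line, float a)
{
    assert(a > 0.0f && a <= 1.0f);
    if (line.empty())
        return;
    float acc = line.front();
    for (std::size_t i = 0; i < line.size(); ++i) {   // forward pass
        acc += a * (line[i] - acc);
        line[i] = acc;
    }
    acc = line.back();
    for (std::size_t i = line.size(); i-- > 0;) {     // backward pass
        acc += a * (line[i] - acc);
        line[i] = acc;
    }
}
```

The work per sample is one multiply and two additions per pass, whatever the blur strength. The impulse response is a two-sided exponential rather than a bell curve, which is one way to see why the result looks less Gaussian.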

For this particular use-case, namely blurring the shadow (as opposed to a generic blur filter), I’m surprised that the Gaussian blur approximation is not necessarily slower than KHTML’s stack blur approach or even QGraphicsDropShadowEffect’s exponential blur. I did a quick benchmark, measuring the time spent creating the shadow for two images (horizontal 149×13 and vertical 13×149) for different radii (small 4px and medium 17px), on a Core i7 machine. The outcome is shown in the following bar chart. The results come from several runs, with the overall confidence level observed to make sure they were statistically sound. Still, take them with a pinch of salt.

With a very large blur radius, e.g. 50, stack blur performance deteriorates quickly, probably because of the pre-loop initial setup. Exponential blur is pretty much radius-agnostic, although I can’t find cases where it wins against my shadow blur code (which is BTW only 60 lines). Larger source images would highlight the performance difference even more. Maybe I am doing something wrong, or maybe that’s how it is. In any case, I have inserted a low-priority entry in my (already infinite) TODO list: spend some quality evenings with callgrind and examine those blur implementations.

On mobile devices, however, the situation is reversed. The approximated Gaussian blur is consistently slower, by around 10%, than exponential or stack blur when tested on a 600 MHz Cortex A8-powered Nokia N900. Due to slower memory and a smaller cache, the repeated memory access for the successive box blurs cancels out their fast processing benefit. Hopefully the 1 GHz generation of CPUs (like in the Nokia N8) and an improved memory bus will eliminate this minor slowdown.

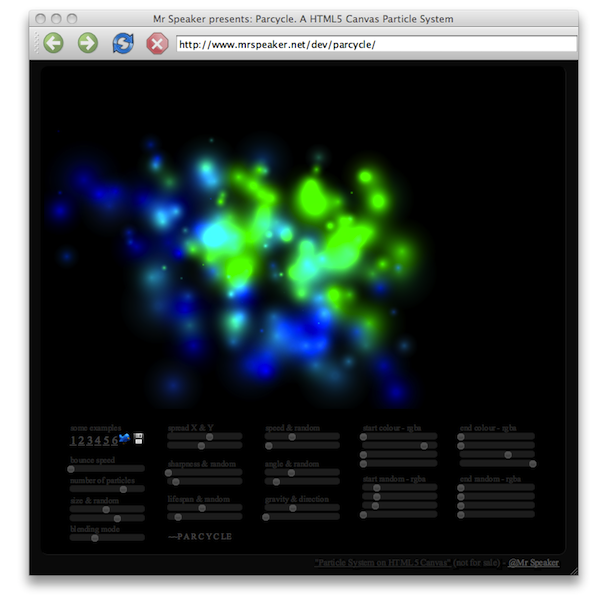

Anyway, in all cases, just like Andreas mentioned, now you can enjoy the fancy Parcycle demo in its full glory: